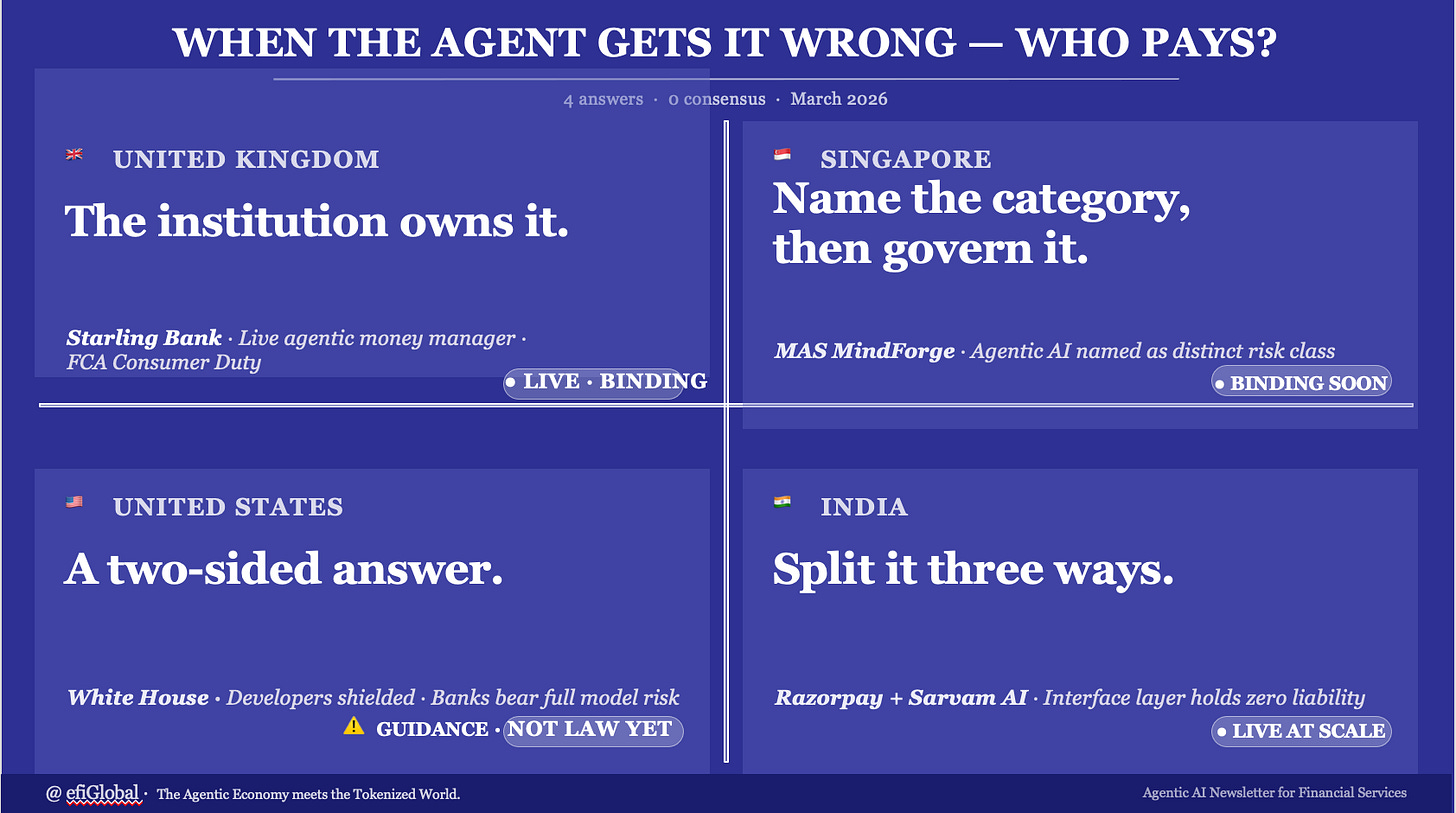

When the Agent Gets It Wrong, Who Pays?

The liability question agentic finance cannot avoid - and four different answers from one week

One of the defining questions emerging in the Agentic Economy is deceptively simple: when an AI agent acts on someone’s behalf and gets it wrong, who is accountable?

It sounds like a legal edge case. It is not. As autonomous agents move from demos to production - executing payments, managing money, making compliance decisions - the liability question is becoming the governance question that determines which institutions can scale agentic AI safely and which cannot.

In a single week in March 2026, four separate developments each answered it differently. A UK bank, an Indian payments partnership, a White House policy framework, and a Singapore regulatory handbook all took a position.

There is no consensus yet. But these four answers are already becoming reference points that will shape the industry’s governance architecture for years.

The rails for autonomous finance are live. The liability architecture for those rails is being written right now, by the institutions and regulators willing to commit to an answer.

Answer 1: The Institution Bears It (Starling Bank)

On March 25, Starling Bank went live with the UK’s first agentic AI money manager. ‘Starling Assistant’ does not advise customers - it acts. It sets up recurring transfers, creates savings accounts, interrogates spending patterns, and executes financial decisions autonomously within customer-defined parameters.

The governance choice Starling made is its answer to the liability question: the institution owns it. All customer data stays within Starling’s own Google Cloud environment and is never used to train external models. The CIO leading the project chairs the Bank of England AI Taskforce. The deployment is opt-in only. Every design decision signals the same thing: Starling is treating the full accountability surface as its own.

This is the most conservative answer - and the most credible one for a regulated deposit-taking institution operating under the FCA’s Consumer Duty. When an agent with account-writing permissions makes an error, the customer will look to the bank. Starling has built with that reality in mind from day one.

The watch-out is not regulatory. It is competitive. Starling’s assistant quality is built on eight years of proprietary transaction data. Any institution that thinks it can replicate this by wrapping a third-party model in a chatbot interface is misreading why this deployment works.

Answer 2: Split It Three Ways (Razorpay + Sarvam AI)

The same week, on the other side of the world, Razorpay and Sarvam AI deployed end-to-end voice commerce across 11 Indian languages. A customer can discover a product, order it, and complete payment within a single conversation - without touching a screen. Twenty-plus merchant partners went live within a week of the announcement.

The headline is not the technology. It is the liability framework they published alongside it. For the first time in an agentic commerce deployment at production scale, accountability was explicitly divided across three layers: Razorpay holds payment security liability. The merchant handles fulfilment disputes. Sarvam, as the interface layer, bears no financial liability.

This three-way split is architecturally elegant and commercially rational. It mirrors how liability is allocated in traditional payment networks - acquirer, merchant, card scheme - and extends that logic to the agentic layer. Regulators and contract negotiators across the industry will reference this framework, whether or not they cite it.

The pressure point is Sarvam’s zero-liability position. As the interface layer that actually interprets customer intent and instructs the agent, Sarvam sits at the point where most errors will originate - mishearing, misranking, misinterpreting. Assigning zero liability to the point of highest failure risk is a position that will be tested.

Answer 3: A Two-Sided Answer (White House)

On March 20, the White House released its National Policy Framework for AI - four pages of legislative recommendations, not binding rules. It is ‘light-touch’ in headline terms, but the operational implication for financial institutions is anything but light. The framework delivers a two-sided answer to the liability question, and the two sides point in opposite directions depending on who you are.

For AI developers and model providers, it is protective: the framework explicitly recommends that states be barred from holding AI developers liable for how third parties misuse their models. This is a deliberate shield for the model layer - if the recommendation is enacted, OpenAI, Anthropic, and other foundation model providers could not be held responsible under state law for what a deploying bank does with their technology.

For deploying financial institutions, it is the opposite. The framework channels all AI accountability through existing sector-specific regulators - OCC, Fed, FDIC, CFPB - and their existing model risk management expectations now apply fully to agentic systems. A bank deploying a third-party LLM for credit decisions cannot disclaim accountability for that model’s outputs. The testing burden - pre-deployment validation, production drift monitoring, third-party model governance - lands entirely on the deploying institution. QA and testing teams become the compliance front line.

The fintech dimension is where the framework creates a structural tension it does not resolve. OCC, Fed, and FDIC model risk management guidance applies to chartered banks. Non-bank fintechs - payments companies, BNPL providers, embedded finance platforms - face the CFPB if they are consumer-facing and the FTC for data and advertising practices, but not the same formal model governance expectations that banks bear. The CFPB has been explicit: ‘there are no exceptions to consumer financial protection laws for new technologies’. The Bank Policy Institute has flagged the asymmetry directly: banks face burdensome model risk oversight that their non-bank fintech competitors do not. The White House framework does not close this gap - it routes through the existing asymmetric structure and leaves it in place.

One further caveat that matters: this is not law. It is a set of legislative recommendations to Congress. No new legal obligations exist yet. The preemption of state AI legislation - the framework’s most operationally significant provision for multi-state institutions - remains legally uncertain and will be tested in court. Firms cannot rely on it as a compliance defence today.

Answer 4: Name the Category, Then Govern It (MAS)

The same week, the Monetary Authority of Singapore released MindForge - the output of Project MindForge Phase 2, a two-year industry-regulator collaboration. The toolkit includes a 26-page executive handbook, a 173-page operational handbook, and a case study compilation. It is the most comprehensive answer to the liability question from a regulatory body anywhere, and it makes one move that none of the other answers make: it explicitly names agentic AI as a distinct risk category, separate from both traditional AI and generative AI.

That naming matters more than the page count. Every regulatory framework before MindForge has addressed AI governance in general terms - principles of fairness, transparency, and accountability. MindForge says explicitly that systems that plan, execute, and use tools autonomously across multiple steps carry materially different governance requirements than systems that generate text. The distinction will travel to other regulators.

MindForge was developed by a 24-institution consortium - spanning banks, insurers, and asset managers including DBS, Julius Baer, BlackRock, and Prudential, with technology partners including AWS, Google Cloud, Microsoft, and Nvidia - so the toolkit reflects actual deployment experience, not regulator theory. DBS’s contribution is instructive. Three elements are documented as reference implementations: its PURE framework (Purposeful, Unsurprising, Respectful, Explainable) for ethical AI governance, its phased approach of starting Gen AI adoption with internal use only under high human oversight, and its cross-functional Responsible AI Taskforce structure. These are the benchmarks against which MAS examiners will implicitly measure institutions. A firm that cannot articulate why its approach differs - or why it is less mature - will struggle.

On liability specifically: MindForge does not assign legal liability - it is a risk management framework, not an enforcement mechanism. What it does is make the deploying financial institution accountable for AI governance across the full lifecycle, including for third-party models. This mirrors the White House framework’s logic at the deployment layer, but with far more operational specificity. MAS’s proposed Guidelines on AI Risk Management, currently in public consultation, will make these expectations binding when finalised. Treating MindForge as voluntary today is a timing error.

The Gap Between the Answers

Four answers. Zero consensus. And the gap between them is not merely philosophical - it is a commercial and regulatory risk for any institution operating across jurisdictions.

Starling’s answer (the institution owns it) is the most credible for consumer-facing agentic deployments in regulated markets. It is also the most expensive to implement correctly.

Razorpay’s answer (split it contractually) is the most commercially scalable - but it leaves the interface layer, which is precisely where most failures will occur in voice-first systems, carrying no financial liability.

The White House answer is the most complex - deliberately so. It protects the model layer (developers would not be liable under state law for third-party misuse) while loading the testing and governance burden onto deploying institutions through existing sector regulators. For banks, full accountability. For many non-bank fintechs, a lighter and more ambiguous burden. And for everyone: no new law yet, only recommendations to Congress.

MAS’s answer (name the category, then govern it) is the most durable - because it acknowledges that agentic AI is not the same governance problem as generative AI, and builds a framework specific to the difference.

The question for every financial services leader is not which framework applies to them. It is which of these four answers their own governance architecture most closely resembles - and whether that is a deliberate choice or an accidental one.

What to Watch

The liability question will be answered again next week, and the week after. Watch for: the first FCA examination that applies Consumer Duty to an agentic deployment; the first dispute arising from a Razorpay-style three-way liability split; the first US bank that discloses its third-party LLM governance framework under examination pressure; the first non-bank fintech that faces enforcement under existing consumer protection law for an AI output it claimed was the vendor’s responsibility; and the first institution outside Singapore that adopts MindForge’s agentic-specific risk categorisation proactively.

The institutions that define those answers - rather than wait for regulators to define them - will hold a governance advantage that compounds over time. That advantage is worth more than any benchmark performance number.

- @efiglobal

This piece is drawn from Edition 42 of Agentic AI in Financial Services - a weekly newsletter curating AI transformation across financial services for 40,000+ subscribers. The newsletter is a human-AI collaboration involving seven AI agents and my own curation and refinement.

The Agentic Economy meets the Tokenized World. This is the intersection I cover with a fintech lens.
